Insights from the OpenRouter & a16z Empirical Study

The landscape of artificial intelligence is evolving at breakneck speed. Benchmarks and academic models are useful, but nothing reveals how AI is actually used in the real world like massive-scale usage data. The State of AI: An Empirical 100 Trillion Token Study, produced by OpenRouter in partnership with Andreessen Horowitz (a16z), presents the largest empirical analysis of AI interactions to date, examining over 100 trillion tokens of real LLM (large language model) usage. This YouTube video breaks down the key findings of the study, showing how developers, enterprises, and end users interact with LLMs today and what those behaviors imply for the future of AI.

What “100 Trillion Tokens” Means

In the context of AI:

- A token is the unit of text or data a model processes. In natural language, a token is roughly a word fragment, so one word may span one or several tokens.

- 100 trillion tokens reflects real usage data across millions of developers, users, and applications running on the OpenRouter platform, spanning hundreds of models.

- This dataset far exceeds typical research benchmarks in scale, offering an authentic view of how models are used in production.
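To give a feel for the scale, here is a minimal sketch that estimates token counts and expresses 100 trillion tokens as a number of typical requests. It assumes the common rule of thumb of about 4 characters per token for English text; real tokenizers differ by model and language. The function names and the 1,000-token request size are hypothetical and chosen only for illustration; none of this comes from the study itself.

```python
import math

# Assumption: ~4 characters per token is a rough rule of thumb for English.
# Actual tokenizers vary by model and language.
CHARS_PER_TOKEN = 4

# The dataset size cited in the study: 100 trillion tokens.
DATASET_TOKENS = 100 * 10**12


def estimate_tokens(text: str) -> int:
    """Return a rough token estimate for a string (hypothetical helper)."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)


def equivalent_requests(tokens_per_request: int) -> int:
    """How many requests of a given size would add up to the dataset."""
    return DATASET_TOKENS // tokens_per_request


if __name__ == "__main__":
    prompt = "Summarize the key findings of the State of AI report."
    print("Estimated tokens in prompt:", estimate_tokens(prompt))
    # Illustrative request size of 1,000 tokens (an assumption, not a study figure).
    print("Equivalent 1,000-token requests:", equivalent_requests(1_000))
```

Under these assumptions, the dataset corresponds to on the order of a hundred billion 1,000-token requests, which is why it dwarfs typical benchmark suites.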

Key Findings from the Study (Explained in the Video)

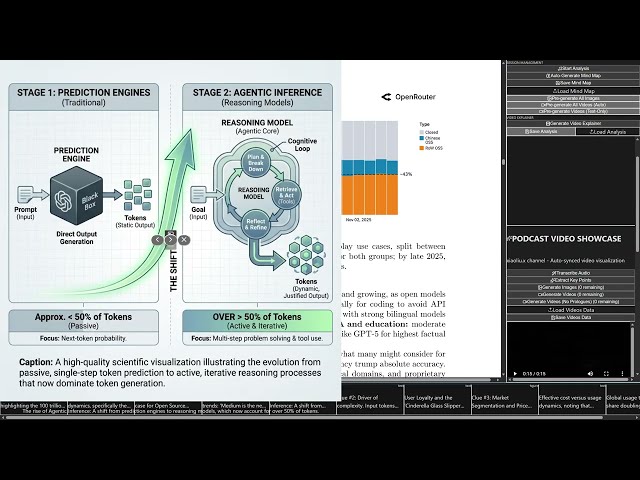

1. AI Use Has Shifted from Simple Tasks to Complex Workflows

The study shows that most interaction patterns today involve multi-step reasoning and agentic behavior — meaning users expect AI systems to plan, iterate, and execute sequences of tasks rather than just answer single queries. Classic “one-prompt/one-response” usage is no longer the dominant pattern.

2. Reasoning Models Now Dominate Token Usage

Models designed for deep reasoning and extended interaction — such as o1 (released in late 2024) and other next-gen LLMs — now account for more than half of all token usage. This reflects real demand for models that can think through problems and interact with tools rather than simply generate text.

3. Open-Source Models Are Growing Rapidly

While proprietary models still account for the bulk of usage, open-source (open-weight) models have grown to somewhere between roughly 30% and one-third of total traffic, depending on the week. Open-source leaders such as DeepSeek, Qwen, and others have seen significant adoption because of cost efficiency, flexibility, and customization potential.

4. Use Cases Are Surprising

Contrary to the common assumption that AI is mainly about productivity tasks, the report reveals:

- Role-play and creative interaction account for a large share of open-source model usage.

- Coding assistance and developer workflows are among the top professional applications.

- AI usage patterns vary widely across demographics and tasks, indicating diverse user motivations.

5. Retention Patterns Suggest Long-Term Engagement

The researchers describe a phenomenon called the “Glass Slipper Effect”: once users find an AI model that fits their needs, especially for complex tasks, they tend to stick with it rather than switch to another model. This suggests loyalty and satisfaction tied to performance and task fit.

Why This Matters

This empirical analysis moves the narrative around AI from theory and benchmarks to real-world behavior. Traditional evaluations that focus on accuracy scores and benchmark tests may not correlate with what developers and organizations actually find useful. The large-scale usage data shows that:

- The role of AI is expanding beyond assistants into active collaborators.

- Open-source ecosystems are becoming more competitive with proprietary offerings.

- AI demand is not monolithic — different models and approaches serve distinct user needs.

For AI builders, product managers, and technical leaders, these insights help inform strategic decisions around which capabilities to prioritize and where future innovation is likely to have the greatest impact.

Conclusion

The State of AI report, and this YouTube video summarizing its findings, highlight that the future of AI will be shaped by real usage patterns, not just benchmarks or theoretical models. With over 100 trillion tokens' worth of interaction data, the study gives a robust picture of how models are used, what kinds of tasks are driving engagement, and how the ecosystem is shifting toward richer, more complex AI applications.
